Lately I have been thinking about how strange life can be. One chapter starts closing, and suddenly you can see the whole road behind you a little more clearly.

I have already shared that I am leaving TFL, and that has naturally pushed me into reflection. Not just on the last few years, but on more than 25 years professionally, and really close to 40 years since I first got my hands on a computer that felt like mine.

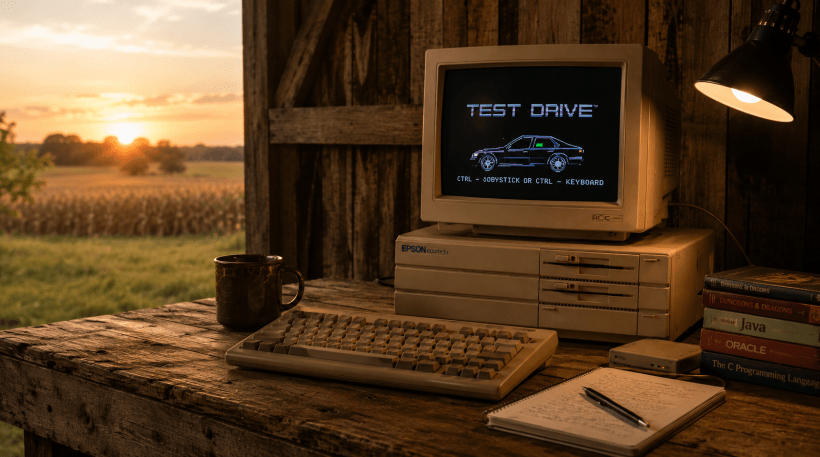

My first machine was an Epson Equity I+. Long before I had any clue where my career would go, I was already hooked. I still remember the first time my cousin and I got our computers talking over a modem from houses miles apart. At the time, it felt like magic. Then came BBSes, early online experiences, and those first little windows into what a connected world could become.

Back then, limits were not frustrating. They were invitations.

When services charged by the email message, I remember trying to outthink the system. On Prodigy, one trick was sending mail to a dead end so it would bounce back, then sharing account credentials in chat so someone else could retrieve the returned message as a private message. Looking back, that probably told me something about myself pretty early on. I was not just interested in using systems. I wanted to understand them well enough to bend them.

Later came Windows 95 and my next computer. I really wanted a Gateway, but ended up with a Compaq Presario. That machine became a workshop. I hosted an FTP server and a web server over my DSL line and built sites for local businesses. I started Compu-Doc and spent time in homes and small businesses fixing computers, setting up networks, and solving whatever problem was sitting on the desk in front of me.

That season taught me something important. I could do hardware and networking, but software was the thing that lit me up.

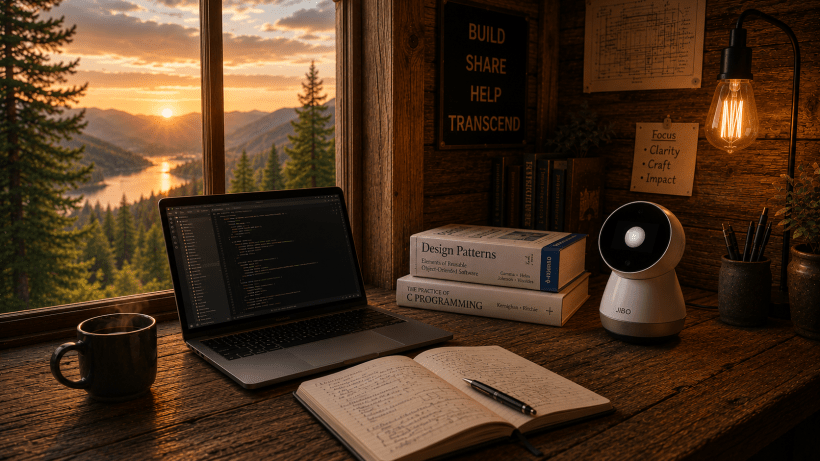

So I kept learning. Visual Basic. Java. C. COBOL. JCL. Oracle. College gave me the path, but curiosity supplied most of the fuel. I wanted to know how things worked, how they broke, and how they could be rebuilt better.

Then came Ticket Solutions, and the rest of the story started taking shape.

One of the most meaningful chapters was helping build what became the industry’s first real-time ticketing exchange. What makes that story more interesting to me is that I was not originally assigned to the project. At the time, I was building what we called Spinner software, which automated buying tickets on Ticketmaster. It was the wild west of online ticketing back then, long before the guardrails that exist today.

The other engineers were focused on ecommerce, POS, and the first exchange prototype. They took that prototype onsite to deploy it, and it did not work. When they got back, we regrouped and rebuilt it around ideas from chat server technology. The new design was more of a hub-and-spoke model, with clients connected to a central server through sockets so we could query their databases instantly when needed.
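For anyone curious what that hub-and-spoke shape looks like in code, here is a minimal Python sketch. It is an illustration only, not the original system: the `Hub` class, the `run_spoke` function, the newline-delimited JSON messages, the name handshake, and the in-memory `inventory` dictionary standing in for a client database are all assumptions made up for this example.

```python
import json
import socket
import threading


class Hub:
    """Central server that keeps one open socket per connected spoke."""

    def __init__(self, host="127.0.0.1", port=0):
        # Port 0 asks the OS for any free port, which is fine for a local demo.
        self._server = socket.create_server((host, port))
        self.address = self._server.getsockname()
        self._spokes = {}  # spoke name -> (socket, reader, writer)
        self._lock = threading.Lock()

    def accept_one(self):
        """Accept one spoke. Its first line is its name (assumed handshake)."""
        conn, _ = self._server.accept()
        reader = conn.makefile("r", encoding="utf-8", newline="\n")
        writer = conn.makefile("w", encoding="utf-8", newline="\n")
        name = reader.readline().strip()
        with self._lock:
            self._spokes[name] = (conn, reader, writer)
        return name

    def query(self, name, request):
        """Send a request over the spoke's existing socket and wait for its reply."""
        with self._lock:
            _, reader, writer = self._spokes[name]
            writer.write(json.dumps(request) + "\n")
            writer.flush()
            return json.loads(reader.readline())

    def close(self):
        """Drop every spoke connection, then stop listening."""
        with self._lock:
            for conn, reader, writer in self._spokes.values():
                writer.close()
                reader.close()
                conn.close()
            self._spokes.clear()
        self._server.close()


def run_spoke(address, name, inventory):
    """Spoke: dial out to the hub, then answer queries from local data."""
    with socket.create_connection(address) as conn, \
            conn.makefile("r", encoding="utf-8", newline="\n") as reader, \
            conn.makefile("w", encoding="utf-8", newline="\n") as writer:
        writer.write(name + "\n")
        writer.flush()
        for line in reader:  # loop ends when the hub closes the socket
            request = json.loads(line)
            # Stand-in for a lookup against the client's own database.
            seats = inventory.get(request["event"], [])
            reply = {"event": request["event"], "seats": seats}
            writer.write(json.dumps(reply) + "\n")
            writer.flush()
```

The point of the shape is that each spoke dials out once and stays connected, so the hub can ask any client a question over a socket that is already open instead of polling or waiting on a batch upload. A real deployment would also need authentication, timeouts, and reconnect handling, all of which this sketch leaves out.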

That system worked. We launched it. A few years later, patents followed. Then the technology and patents were sold to StubHub.

That was not the end. It was one bend in a long road.

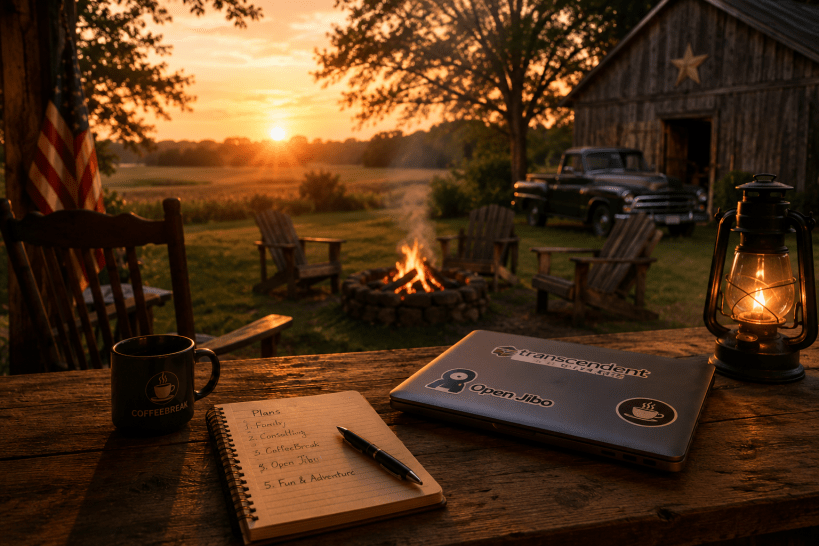

Over the years, we built more companies, more products, and more technology. In 2009, I founded Transcendent Software because I saw a need to help more businesses with interesting technology problems. I did help some, but my day job kept me busy, so expansion was not on the table yet. With the family of companies I worked for, we explored neural networks before that was fashionable dinner-table conversation. We worked on advanced machine learning. I spent time with genetic algorithms and optimization problems, like taking traveling salesman ideas and applying them to real-world logistics. Some bets worked. Some did not. That is the nature of building. You plant a lot of seeds, and not every one becomes a tree.
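For readers who have not seen a genetic algorithm applied to a traveling-salesman-style routing problem, here is a small Python sketch of the general idea. It is a textbook-style illustration, not the code from those projects: the function names, the ordered-crossover and swap-mutation operators, and the default parameters are all assumptions chosen for the example.

```python
import math
import random


def tour_length(tour, cities):
    """Total length of the closed route visiting cities in the given order."""
    return sum(
        math.dist(cities[tour[i]], cities[tour[(i + 1) % len(tour)]])
        for i in range(len(tour))
    )


def ordered_crossover(a, b, rng):
    """Keep a slice of parent a in place, fill the rest in parent b's order."""
    i, j = sorted(rng.sample(range(len(a) + 1), 2))
    kept = set(a[i:j])
    rest = [stop for stop in b if stop not in kept]
    return rest[:i] + a[i:j] + rest[i:]


def mutate(tour, rng, rate):
    """Occasionally swap two stops to keep some diversity in the population."""
    tour = tour[:]
    if rng.random() < rate:
        i, j = rng.sample(range(len(tour)), 2)
        tour[i], tour[j] = tour[j], tour[i]
    return tour


def evolve_route(cities, generations=200, pop_size=50, elite=5, rate=0.2, seed=0):
    """Evolve a short closed route through all cities (hypothetical helper)."""
    rng = random.Random(seed)
    n = len(cities)
    # Start with the stops in listed order as a baseline, plus random shuffles.
    population = [list(range(n))]
    population += [rng.sample(range(n), n) for _ in range(pop_size - 1)]
    for _ in range(generations):
        population.sort(key=lambda t: tour_length(t, cities))
        parents = population[: pop_size // 2]  # fitter half gets to breed
        children = population[:elite]          # elitism: best routes survive as-is
        while len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            children.append(mutate(ordered_crossover(a, b, rng), rng, rate))
        population = children
    return min(population, key=lambda t: tour_length(t, cities))
```

Calling `evolve_route` with a list of `(x, y)` stops returns a visiting order as a list of indices. Because the best routes are carried forward unchanged each generation, the result is never worse than the listed order, but it is a heuristic and is not guaranteed to be optimal. Real logistics problems add constraints such as time windows and vehicle capacity that this sketch ignores.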

In 2013, we pursued patents around technology for analyzing social media for signs of distress in kids. We believed parents would want alerts when something seemed wrong. We invested in it, did the work, and then shut it down after research suggested parents would not pay for it. I still sometimes wonder what would have happened if we had pivoted the use case instead of walking away. But every builder has a few doors in memory that never got opened all the way.

From there came logistics, data, and scaling VeriShip with a sharper focus on data science and contract negotiation. Then a return to Ticket Solutions full-time with an emphasis on process automation. Then COVID-19. Then Ticket Solutions being acquired by TFL.

And then another reinvention.

We helped take TFL, a company with very little technology muscle, and turn it into a real technology-powered business. It worked. The company grew in big ways. And now here we are, with me stepping away from that chapter too.

Crazy.

When I look back, what stands out is not just the companies, patents, exits, or titles. It is the thread running through all of it. Curiosity. Building. Adapting. Looking at a system and believing there is probably a smarter way.

That part of me has not changed since the Epson days.

So where am I headed next? I am still a builder. Still a systems thinker. Still drawn to the space where software, data, intelligence, and practical business value meet. If anything, I trust experience more now than hype. I care more about what works, what lasts, and what actually helps people move forward.

I do not know exactly what the next chapter will look like yet.

But I know this much. I am still building.